face-api.js

JavaScript face recognition API for the browser and nodejs implemented on top of tensorflow.js core (tensorflow/tfjs-core)

Click me for Live Demos!

Tutorials

- face-api.js — JavaScript API for Face Recognition in the Browser with tensorflow.js

- Realtime JavaScript Face Tracking and Face Recognition using face-api.js’ MTCNN Face Detector

- Realtime Webcam Face Detection And Emotion Recognition - Video

- Easy Face Recognition Tutorial With JavaScript - Video

- Using face-api.js with Vue.js and Electron

- Add Masks to People - Gant Laborde on Learn with Jason

Table of Contents

- Features

- Running the Examples

- face-api.js for the Browser

- face-api.js for Nodejs

- Usage

- Available Models

- API Documentation

Features

Face Recognition

Face Landmark Detection

Face Expression Recognition

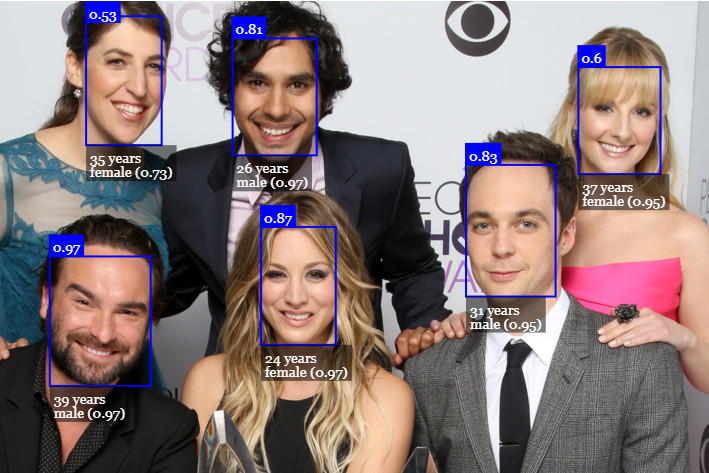

Age Estimation & Gender Recognition

Running the Examples

Clone the repository:

git clone https://github.com/justadudewhohacks/face-api.js.git

Running the Browser Examples

cd face-api.js/examples/examples-browser
npm i
npm start

Browse to http://localhost:3000/.

Running the Nodejs Examples

cd face-api.js/examples/examples-nodejs
npm i

Now run one of the examples using ts-node:

ts-node faceDetection.ts

Or simply compile and run them with node:

tsc faceDetection.ts
node faceDetection.js

face-api.js for the Browser

Simply include the latest script from dist/face-api.js.

Or install it via npm:

npm i face-api.js

face-api.js for Nodejs

We can use the equivalent API in a nodejs environment by polyfilling some browser specifics, such as HTMLImageElement, HTMLCanvasElement and ImageData. The easiest way to do so is by installing the node-canvas package.

Alternatively you can simply construct your own tensors from image data and pass tensors as inputs to the API.
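As a rough sketch of the tensor route, the helper below converts RGBA pixel data (the layout used by ImageData) into a flat RGB array plus a [height, width, 3] shape. The name `rgbaToRgb` is hypothetical and not part of face-api.js; the result is the kind of data one could hand to `tf.tensor3d` from tensorflow.js before passing the tensor to the API.

```javascript
// Hypothetical helper: drop the alpha channel from RGBA pixel data.
// `rgba` is a flat array of length width * height * 4, as found in ImageData.data.
function rgbaToRgb(rgba, width, height) {
  const rgb = new Uint8Array(width * height * 3)
  for (let i = 0, j = 0; i < width * height * 4; i += 4, j += 3) {
    rgb[j] = rgba[i]         // red
    rgb[j + 1] = rgba[i + 1] // green
    rgb[j + 2] = rgba[i + 2] // blue
  }
  // the shape follows the [height, width, channels] convention of image tensors
  return { values: rgb, shape: [height, width, 3] }
}
```

The alpha channel is dropped because the sketch assumes a three-channel RGB input.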

You will also want to install @tensorflow/tfjs-node (not required, but highly recommended), which speeds things up drastically by compiling and binding to the native Tensorflow C++ library:

npm i face-api.js canvas @tensorflow/tfjs-node

Now we simply monkey patch the environment to use the polyfills:

// import nodejs bindings to native tensorflow,
// not required, but will speed up things drastically (python required)
import '@tensorflow/tfjs-node';

// implements nodejs wrappers for HTMLCanvasElement, HTMLImageElement, ImageData
import * as canvas from 'canvas';

import * as faceapi from 'face-api.js';

// patch nodejs environment, we need to provide an implementation of
// HTMLCanvasElement and HTMLImageElement
const { Canvas, Image, ImageData } = canvas
faceapi.env.monkeyPatch({ Canvas, Image, ImageData })

Getting Started

Loading the Models

All global neural network instances are exported via faceapi.nets:

console.log(faceapi.nets)
// ageGenderNet
// faceExpressionNet
// faceLandmark68Net
// faceLandmark68TinyNet
// faceRecognitionNet
// ssdMobilenetv1
// tinyFaceDetector
// tinyYolov2

To load a model, you have to provide the corresponding manifest.json file as well as the model weight files (shards) as assets. Simply copy them to your public or assets folder. The manifest.json and shard files of a model have to be located in the same directory / accessible under the same route.

Assuming the models reside in public/models:

await faceapi.nets.ssdMobilenetv1.loadFromUri('/models')
// accordingly for the other models:
// await faceapi.nets.faceLandmark68Net.loadFromUri('/models')
// await faceapi.nets.faceRecognitionNet.loadFromUri('/models')
// ...
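When several models are needed, the loads can run in parallel. The following is a minimal sketch, not part of the library: `loadModels` is a hypothetical helper that assumes the object passed in looks like faceapi.nets, meaning each entry exposes a `loadFromUri(uri)` method that returns a promise.

```javascript
// Hypothetical helper: load several nets from the same route in parallel.
// `nets` is expected to look like faceapi.nets.
async function loadModels(nets, names, uri) {
  return Promise.all(names.map(name => {
    if (!nets[name]) {
      throw new Error(`unknown net: ${name}`)
    }
    return nets[name].loadFromUri(uri)
  }))
}

// usage in the browser, assuming the models reside in public/models:
// await loadModels(faceapi.nets, ['ssdMobilenetv1', 'faceLandmark68Net'], '/models')
```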

In a nodejs environment you can furthermore load the models directly from disk:

await faceapi.nets.ssdMobilenetv1.loadFromDisk('./models')

You can also load the model from a tf.NamedTensorMap:

await faceapi.nets.ssdMobilenetv1.loadFromWeightMap(weightMap)

Alternatively, you can also create own instances of the neural nets:

const net = new faceapi.SsdMobilenetv1()
await net.loadFromUri('/models')

You can also load the weights as a Float32Array (in case you want to use the uncompressed models):

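// using fetch
net.load(await faceapi.fetchNetWeights('/models/face_detection_model.weights'))

// using axios
const res = await axios.get('/models/face_detection_model.weights', { responseType: 'arraybuffer' })
const weights = new Float32Array(res.data)
net.load(weights)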

High Level API

In the following, input can be an HTML img, video or canvas element, or the id of that element.

const input = document.getElementById('myImg')
// const input = document.getElementById('myVideo')
// const input = document.getElementById('myCanvas')
// or simply:
// const input = 'myImg'

Detecting Faces

Detect all faces in an image. Returns Array<FaceDetection>:

const detections = await faceapi.detectAllFaces(input)

Detect the face with the highest confidence score in an image. Returns FaceDetection | undefined:

const detection = await faceapi.detectSingleFace(input)
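Conceptually, picking the single face amounts to taking the detection with the highest score. The sketch below shows that selection on plain objects. It assumes each detection carries a numeric `score` field, and `pickHighestScore` is a hypothetical name rather than the library's internal implementation.

```javascript
// Return the detection with the highest score, or undefined for an empty list.
function pickHighestScore(detections) {
  return detections.reduce(
    (best, det) => (best === undefined || det.score > best.score ? det : best),
    undefined
  )
}
```

Returning undefined for an empty list mirrors the `FaceDetection | undefined` return type described above.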

By default detectAllFaces and detectSingleFace utilize the SSD Mobilenet V1 Face Detector. You can specify the face detector by passing the corresponding options object:

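const detections1 = await faceapi.detectAllFaces(input, new faceapi.SsdMobilenetv1Options())
const detections2 = await faceapi.detectAllFaces(input, new faceapi.TinyFaceDetectorOptions())

You can tune the options of each face detector as shown in the Face Detection Options section.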

Detecting 68 Face Landmark Points

After face detection, we can furthermore predict the facial landmarks for each detected face as follows:

Detect all faces in an image + compute 68 Point Face Landmarks for each detected face. Returns Array<WithFaceLandmarks<WithFaceDetection<{}>>>:

const detectionsWithLandmarks = await faceapi.detectAllFaces(input).withFaceLandmarks()

Detect the face with the highest confidence score in an image + compute 68 Point Face Landmarks for that face. Returns WithFaceLandmarks<WithFaceDetection<{}>> | undefined:

const detectionWithLandmarks = await faceapi.detectSingleFace(input).withFaceLandmarks()

You can also specify to use the tiny model instead of the default model:

const useTinyModel = true
const detectionsWithLandmarks = await faceapi.detectAllFaces(input).withFaceLandmarks(useTinyModel)

Computing Face Descriptors

After face detection and facial landmark prediction the face descriptors for each face can be computed as follows:

Detect all faces in an image + compute 68 Point Face Landmarks and a face descriptor for each detected face. Returns Array<WithFaceDescriptor<WithFaceLandmarks<WithFaceDetection<{}>>>>:

const results = await faceapi.detectAllFaces(input).withFaceLandmarks().withFaceDescriptors()

Detect the face with the highest confidence score in an image + compute 68 Point Face Landmarks and face descriptor for that face. Returns WithFaceDescriptor<WithFaceLandmarks<WithFaceDetection<{}>>> | undefined:

const result = await faceapi.detectSingleFace(input).withFaceLandmarks().withFaceDescriptor()

Recognizing Face Expressions

Face expression recognition can be performed for detected faces as follows:

Detect all faces in an image + recognize face expressions of each face. Returns Array<WithFaceExpressions<WithFaceLandmarks<WithFaceDetection<{}>>>>:

const detectionsWithExpressions = await faceapi.detectAllFaces(input).withFaceLandmarks().withFaceExpressions()

Detect the face with the highest confidence score in an image + recognize the face expressions for that face. Returns WithFaceExpressions<WithFaceLandmarks<WithFaceDetection<{}>>> | undefined:

const detectionWithExpressions = await faceapi.detectSingleFace(input).withFaceLandmarks().withFaceExpressions()

You can also skip .withFaceLandmarks(), which will skip the face alignment step (less stable accuracy):

Detect all faces without face alignment + recognize face expressions of each face. Returns Array<WithFaceExpressions<WithFaceDetection<{}>>>:

const detectionsWithExpressions = await faceapi.detectAllFaces(input).withFaceExpressions()

Detect the face with the highest confidence score without face alignment + recognize the face expression for that face. Returns WithFaceExpressions<WithFaceDetection<{}>> | undefined:

const detectionWithExpressions = await faceapi.detectSingleFace(input).withFaceExpressions()
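Once expression probabilities are available, a common next step is to pick the most likely expression. The sketch below works on a plain object that maps expression names to probabilities. That shape, the helper name `dominantExpression` and the 0.05 default cut-off are assumptions made for illustration.

```javascript
// Return the most probable expression, or undefined if none reaches minProbability.
function dominantExpression(expressions, minProbability = 0.05) {
  // sort [name, probability] pairs by descending probability
  const sorted = Object.entries(expressions).sort((a, b) => b[1] - a[1])
  if (sorted.length === 0) return undefined
  const [name, probability] = sorted[0]
  return probability >= minProbability ? { name, probability } : undefined
}
```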

Age Estimation and Gender Recognition

Age estimation and gender recognition from detected faces can be done as follows:

Detect all faces in an image + estimate age and recognize gender of each face. Returns Array<WithAge<WithGender<WithFaceLandmarks<WithFaceDetection<{}>>>>>:

const detectionsWithAgeAndGender = await faceapi.detectAllFaces(input).withFaceLandmarks().withAgeAndGender()

Detect the face with the highest confidence score in an image + estimate age and recognize gender for that face. Returns WithAge<WithGender<WithFaceLandmarks<WithFaceDetection<{}>>>> | undefined:

const detectionWithAgeAndGender = await faceapi.detectSingleFace(input).withFaceLandmarks().withAgeAndGender()

You can also skip .withFaceLandmarks(), which will skip the face alignment step (less stable accuracy):

Detect all faces without face alignment + estimate age and recognize gender of each face. Returns Array<WithAge<WithGender<WithFaceDetection<{}>>>>:

const detectionsWithAgeAndGender = await faceapi.detectAllFaces(input).withAgeAndGender()

Detect the face with the highest confidence score without face alignment + estimate age and recognize gender for that face. Returns WithAge<WithGender<WithFaceDetection<{}>>> | undefined:

const detectionWithAgeAndGender = await faceapi.detectSingleFace(input).withAgeAndGender()

Composition of Tasks

Tasks can be composed as follows:

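// all faces
await faceapi.detectAllFaces(input)
await faceapi.detectAllFaces(input).withFaceExpressions()
await faceapi.detectAllFaces(input).withFaceLandmarks()
await faceapi.detectAllFaces(input).withFaceLandmarks().withFaceExpressions()
await faceapi.detectAllFaces(input).withFaceLandmarks().withFaceExpressions().withFaceDescriptors()
await faceapi.detectAllFaces(input).withFaceLandmarks().withAgeAndGender().withFaceDescriptors()
await faceapi.detectAllFaces(input).withFaceLandmarks().withFaceExpressions().withAgeAndGender().withFaceDescriptors()

// single face
await faceapi.detectSingleFace(input)
await faceapi.detectSingleFace(input).withFaceExpressions()
await faceapi.detectSingleFace(input).withFaceLandmarks()
await faceapi.detectSingleFace(input).withFaceLandmarks().withFaceExpressions()
await faceapi.detectSingleFace(input).withFaceLandmarks().withFaceExpressions().withFaceDescriptor()
await faceapi.detectSingleFace(input).withFaceLandmarks().withAgeAndGender().withFaceDescriptor()
await faceapi.detectSingleFace(input).withFaceLandmarks().withFaceExpressions().withAgeAndGender().withFaceDescriptor()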

Face Recognition by Matching Descriptors

To perform face recognition, one can use faceapi.FaceMatcher to compare reference face descriptors to query face descriptors.

First, we initialize the FaceMatcher with the reference data. For example, we can simply detect faces in a referenceImage and match the descriptors of the detected faces to faces of subsequent images:

const results = await faceapi
  .detectAllFaces(referenceImage)
  .withFaceLandmarks()
  .withFaceDescriptors()

if (!results.length) {
  return
}

// create FaceMatcher with automatically assigned labels
// from the detection results for the reference image
const faceMatcher = new faceapi.FaceMatcher(results)

Now we can recognize a person's face shown in queryImage1:

const singleResult = await faceapi
  .detectSingleFace(queryImage1)
  .withFaceLandmarks()
  .withFaceDescriptor()

if (singleResult) {
  const bestMatch = faceMatcher.findBestMatch(singleResult.descriptor)
  console.log(bestMatch.toString())
}

Or we can recognize all faces shown in queryImage2:

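const results = await faceapi
  .detectAllFaces(queryImage2)
  .withFaceLandmarks()
  .withFaceDescriptors()

results.forEach(fd => {
  const bestMatch = faceMatcher.findBestMatch(fd.descriptor)
  console.log(bestMatch.toString())
})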

You can also create labeled reference descriptors as follows:

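const labeledDescriptors = [
  new faceapi.LabeledFaceDescriptors(
    'obama',
    [descriptorObama1, descriptorObama2]
  ),
  new faceapi.LabeledFaceDescriptors(
    'trump',
    [descriptorTrump]
  )
]

const faceMatcher = new faceapi.FaceMatcher(labeledDescriptors)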
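To make the idea of matching descriptors concrete, here is a simplified nearest-neighbour sketch on plain arrays. It is not the FaceMatcher implementation: `findNearestLabel`, the `{ label, descriptors }` input shape and the 0.6 distance threshold are assumptions made for illustration.

```javascript
// Euclidean distance between two equally long numeric vectors.
function distance(a, b) {
  let sum = 0
  for (let i = 0; i < a.length; i++) {
    sum += (a[i] - b[i]) ** 2
  }
  return Math.sqrt(sum)
}

// Hypothetical matcher: `labeled` is an array of { label, descriptors }.
// `threshold` is an assumed cut-off above which a face counts as unknown.
function findNearestLabel(labeled, query, threshold = 0.6) {
  let best = { label: 'unknown', distance: Infinity }
  for (const { label, descriptors } of labeled) {
    for (const descriptor of descriptors) {
      const d = distance(descriptor, query)
      if (d < best.distance) best = { label, distance: d }
    }
  }
  // keep the measured distance, but hide the label if the match is too far away
  return best.distance <= threshold ? best : { label: 'unknown', distance: best.distance }
}
```

A smaller threshold makes the matcher stricter and yields more unknown results; a larger one accepts weaker matches.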

Displaying Detection Results

Preparing the overlay canvas:

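const displaySize = { width: input.width, height: input.height }
// resize the overlay canvas to the input dimensions
const canvas = document.getElementById('overlay')
faceapi.matchDimensions(canvas, displaySize)

face-api.js predefines some high-level drawing functions, which you can utilize:

/* Display detected face bounding boxes */
const detections = await faceapi.detectAllFaces(input)
// resize the detected boxes in case your displayed image has a different size than the original
const resizedDetections = faceapi.resizeResults(detections, displaySize)
// draw detections into the canvas
faceapi.draw.drawDetections(canvas, resizedDetections)

/* Display face landmarks */
const detectionsWithLandmarks = await faceapi
  .detectAllFaces(input)
  .withFaceLandmarks()
// resize the detected boxes and landmarks in case your displayed image has a different size than the original
const resizedResults = faceapi.resizeResults(detectionsWithLandmarks, displaySize)
// draw detections into the canvas
faceapi.draw.drawDetections(canvas, resizedResults)
// draw the landmarks into the canvas
faceapi.draw.drawFaceLandmarks(canvas, resizedResults)

/* Display face expression results */
const detectionsWithExpressions = await faceapi
  .detectAllFaces(input)
  .withFaceLandmarks()
  .withFaceExpressions()
// resize the detected boxes and landmarks in case your displayed image has a different size than the original
const resizedExpressionResults = faceapi.resizeResults(detectionsWithExpressions, displaySize)
// draw detections into the canvas
faceapi.draw.drawDetections(canvas, resizedExpressionResults)
// draw a textbox displaying the face expressions with minimum probability into the canvas
const minProbability = 0.05
faceapi.draw.drawFaceExpressions(canvas, resizedExpressionResults, minProbability)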
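Resizing results boils down to scaling box coordinates from the original image size to the displayed size. The following sketch illustrates that arithmetic on plain objects; `scaleBox` is a hypothetical helper and is not how the library implements resizing internally.

```javascript
// Hypothetical helper: rescale a box from the original image size to the display size.
function scaleBox(box, originalSize, displaySize) {
  const sx = displaySize.width / originalSize.width
  const sy = displaySize.height / originalSize.height
  return {
    x: box.x * sx,
    y: box.y * sy,
    width: box.width * sx,
    height: box.height * sy
  }
}
```

Horizontal and vertical factors are kept separate so the sketch also covers displays that do not preserve the aspect ratio.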

You can also draw boxes with custom text (DrawBox):

const box = { x: 50, y: 50, width: 100, height: 100 }
// see DrawBoxOptions below
const drawOptions = {
  label: 'Hello I am a box!',
  lineWidth: 2
}
const drawBox = new faceapi.draw.DrawBox(box, drawOptions)
drawBox.draw(document.getElementById('myCanvas'))

DrawBox drawing options:

Finally you can draw custom text fields (DrawTextField):

const text = [
  'This is a textline!',
  'This is another textline!'
]
const anchor = { x: 200, y: 200 }
// see the DrawTextField drawing options below
const drawOptions = {
  anchorPosition: 'TOP_LEFT',
  backgroundColor: 'rgba(0, 0, 0, 0.5)'
}
const drawBox = new faceapi.draw.DrawTextField(text, anchor, drawOptions)
drawBox.draw(document.getElementById('myCanvas'))

DrawTextField drawing options:

Face Detection Options

SsdMobilenetv1Options

// example
const options = new faceapi.SsdMobilenetv1Options({ minConfidence: 0.8 })

TinyFaceDetectorOptions

// example
const options = new faceapi.TinyFaceDetectorOptions({ inputSize: 320 })

Utility Classes

IBox

IFaceDetection

IFaceLandmarks

WithFaceDetection

WithFaceLandmarks

WithFaceDescriptor

WithFaceExpressions

WithAge

WithGender

Other Useful Utility

Using the Low Level API

Instead of using the high level API, you can directly use the forward methods of each neural network:

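const detections1 = await faceapi.ssdMobilenetv1(input, options)
const detections2 = await faceapi.tinyFaceDetector(input, options)
const landmarks1 = await faceapi.detectFaceLandmarks(faceImage)
const landmarks2 = await faceapi.detectFaceLandmarksTiny(faceImage)
const descriptor = await faceapi.computeFaceDescriptor(alignedFaceImage)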

Extracting a Canvas for an Image Region

const regionsToExtract = [
  new faceapi.Rect(0, 0, 100, 100)
]
// actually extractFaces is meant to extract face regions from bounding boxes
// but you can also use it to extract any other region
const canvases = await faceapi.extractFaces(input, regionsToExtract)

Euclidean Distance

// meant to be used for computing the euclidean distance between two face descriptors
const dist = faceapi.euclideanDistance([0, 0], [0, 10])
console.log(dist) // 10

Retrieve the Face Landmark Points and Contours

const landmarkPositions = landmarks.positions

// or get the positions of individual contours,
// only available for 68 point face landmarks (FaceLandmarks68)
const jawOutline = landmarks.getJawOutline()
const nose = landmarks.getNose()
const mouth = landmarks.getMouth()
const leftEye = landmarks.getLeftEye()
const rightEye = landmarks.getRightEye()
const leftEyeBrow = landmarks.getLeftEyeBrow()
const rightEyeBrow = landmarks.getRightEyeBrow()
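The contour arrays are lists of points, so simple geometry can be applied directly. As a small sketch, the hypothetical helper `centroid` below averages a list of points to estimate, for example, the center of an eye. It assumes each point exposes numeric `x` and `y` properties.

```javascript
// Average a list of { x, y } points; returns undefined for an empty list.
function centroid(points) {
  if (points.length === 0) return undefined
  const sum = points.reduce(
    (acc, p) => ({ x: acc.x + p.x, y: acc.y + p.y }),
    { x: 0, y: 0 }
  )
  return { x: sum.x / points.length, y: sum.y / points.length }
}

// usage sketch: const leftEyeCenter = centroid(landmarks.getLeftEye())
```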

Fetch and Display Images from an URL

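const image = await faceapi.fetchImage('/images/example.png')

console.log(image instanceof HTMLImageElement) // true

// displaying the fetched image content
const myImg = document.getElementById('myImg')
myImg.src = image.src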

Fetching JSON

const json = await faceapi.fetchJson('/files/example.json')

Creating an Image Picker

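async function uploadImage() {
  const imgFile = document.getElementById('myFileUpload').files[0]
  // create an HTMLImageElement from a Blob
  const img = await faceapi.bufferToImage(imgFile)
  document.getElementById('myImg').src = img.src
}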

Creating a Canvas Element from an Image or Video Element

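const canvas1 = faceapi.createCanvasFromMedia(document.getElementById('myImg'))
const canvas2 = faceapi.createCanvasFromMedia(document.getElementById('myVideo'))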

Available Models

Face Detection Models

SSD Mobilenet V1

For face detection, this project implements an SSD (Single Shot Multibox Detector) based on MobileNetV1. The neural net computes the location of each face in an image and returns the bounding boxes together with the probability for each face. This face detector aims for high accuracy in detecting face bounding boxes rather than low inference time. The size of the quantized model is about 5.4 MB (ssd_mobilenetv1_model).

The face detection model has been trained on the WIDERFACE dataset and the weights are provided by yeephycho in this repo.

Tiny Face Detector

The Tiny Face Detector is a very performant, realtime face detector that is much faster, smaller and less resource-consuming than the SSD Mobilenet V1 face detector. In return, it performs slightly less well at detecting small faces. This model is extremely mobile and web friendly, so it should be your go-to face detector on mobile devices and resource-limited clients. The size of the quantized model is only 190 KB (tiny_face_detector_model).

The face detector has been trained on a custom dataset of ~14K images labeled with bounding boxes. Furthermore, the model has been trained to predict bounding boxes that entirely cover facial feature points, so in general it produces better results in combination with subsequent face landmark detection than SSD Mobilenet V1.

This model is basically an even tinier version of Tiny Yolo V2, replacing the regular convolutions of Yolo with depthwise separable convolutions. Yolo is fully convolutional, thus can easily adapt to different input image sizes to trade off accuracy for performance (inference time).
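Because the network is fully convolutional, the input size is the main knob for that trade-off. The sketch below snaps a requested size to a multiple of 32. Both the divisibility by 32 and the helper name `snapInputSize` are assumptions here, so check the TinyFaceDetectorOptions documentation before relying on them.

```javascript
// Hypothetical helper: round a requested input size to the nearest multiple of `stride`.
// The default stride of 32 is an assumption, not taken from this document.
function snapInputSize(requested, stride = 32) {
  const snapped = Math.round(requested / stride) * stride
  // never go below one stride, otherwise the network would receive an empty input
  return Math.max(stride, snapped)
}
```

Smaller values trade accuracy on small faces for speed, larger values do the opposite.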

68 Point Face Landmark Detection Models

This package implements a very lightweight and fast, yet accurate 68 point face landmark detector. The default model has a size of only 350 KB (face_landmark_68_model) and the tiny model is only 80 KB (face_landmark_68_tiny_model). Both models employ the ideas of depthwise separable convolutions as well as densely connected blocks. The models have been trained on a dataset of ~35K face images labeled with 68 face landmark points.

Face Recognition Model

For face recognition, a ResNet-34 like architecture is implemented to compute a face descriptor (a feature vector with 128 values) from any given face image, which is used to describe the characteristics of a person's face. The model is not limited to the set of faces used for training, meaning you can use it for face recognition of any person, for example yourself. You can determine the similarity of two arbitrary faces by comparing their face descriptors, for example by computing the euclidean distance or using any other classifier of your choice.

The neural net is equivalent to the FaceRecognizerNet used in face-recognition.js and the net used in the dlib face recognition example. The weights have been trained by davisking and the model achieves a prediction accuracy of 99.38% on the LFW (Labeled Faces in the Wild) benchmark for face recognition.

The size of the quantized model is roughly 6.2 MB (face_recognition_model).

Face Expression Recognition Model

The face expression recognition model is lightweight, fast and provides reasonable accuracy. The model has a size of roughly 310 KB and it employs depthwise separable convolutions and densely connected blocks. It has been trained on a variety of images from publicly available datasets as well as images scraped from the web. Note that wearing glasses might decrease the accuracy of the prediction results.

Age and Gender Recognition Model

The age and gender recognition model is a multitask network, which employs a feature extraction layer, an age regression layer and a gender classifier. The model has a size of roughly 420 KB and the feature extractor employs a tinier but very similar architecture to Xception.

This model has been trained and tested on the following databases with an 80/20 train/test split each: UTK, FGNET, Chalearn, Wiki, IMDB*, CACD*, MegaAge, MegaAge-Asian. The * indicates that these databases have been algorithmically cleaned up, since the initial databases are very noisy.

Total Test Results

Total MAE (Mean Age Error): 4.54

Total Gender Accuracy: 95%
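For readers who want to reproduce this kind of metric on their own data, here is a minimal sketch. It assumes the MAE is the mean absolute difference between predicted and true ages and that accuracy is the share of matching labels; the function names are hypothetical and the evaluation code used for the numbers above is not shown in this document.

```javascript
// Mean absolute difference between predicted and true ages.
function meanAbsoluteError(predictedAges, trueAges) {
  if (predictedAges.length !== trueAges.length || predictedAges.length === 0) {
    throw new Error('inputs must be non-empty and of equal length')
  }
  const total = predictedAges.reduce(
    (sum, predicted, i) => sum + Math.abs(predicted - trueAges[i]),
    0
  )
  return total / predictedAges.length
}

// Share of predictions that equal the true label.
function accuracy(predictedLabels, trueLabels) {
  if (predictedLabels.length !== trueLabels.length || predictedLabels.length === 0) {
    throw new Error('inputs must be non-empty and of equal length')
  }
  const hits = predictedLabels.filter((label, i) => label === trueLabels[i]).length
  return hits / predictedLabels.length
}
```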

Test results for each database

The - indicates that there are no gender labels available for these databases.

| Database | UTK | FGNET | Chalearn | Wiki | IMDB* | CACD* | MegaAge | MegaAge-Asian |
|---|---|---|---|---|---|---|---|---|
| MAE | 5.25 | 4.23 | 6.24 | 6.54 | 3.63 | 3.20 | 6.23 | 4.21 |
| Gender Accuracy | 0.93 | - | 0.94 | 0.95 | - | 0.97 | - | - |

Test results for different age category groups

| Age Range | 0 - 3 | 4 - 8 | 9 - 18 | 19 - 28 | 29 - 40 | 41 - 60 | 60 - 80 | 80+ |
|---|---|---|---|---|---|---|---|---|
| MAE | 1.52 | 3.06 | 4.82 | 4.99 | 5.43 | 4.94 | 6.17 | 9.91 |
| Gender Accuracy | 0.69 | 0.80 | 0.88 | 0.96 | 0.97 | 0.97 | 0.96 | 0.9 |